How do I get my brand mentioned by external sources that AI platforms cite?

Also asked as:

- How do I build third-party mentions for AI search?

- What is mention engineering for AI search?

Scrunch recommends that users identify the external sources AI platforms already cite for core topics, then pursue brand mentions through outreach, review generation, original research, and partner distribution.

Example

Contentstack's AI search & visibility playbook outlines several "mention engineering" tactics, including:

- Identifying articles and industry sites that have already been cited by AI for priority topics and pitching updates that include brand positioning and data

- Soliciting satisfied customers to leave specific, feature‑focused reviews on software review sites like G2, Capterra, and TrustRadius

- Surveying customers and subscribers (or hiring a data collection firm) to create up-to-date, industry-specific benchmark reports and providing stats to media outlets and influencers

- Partnering with YouTubers and newsletters on content

- Participating authentically on Reddit and relevant forums

- Distributing standardized product descriptions to app marketplaces, partner directories, and industry associations

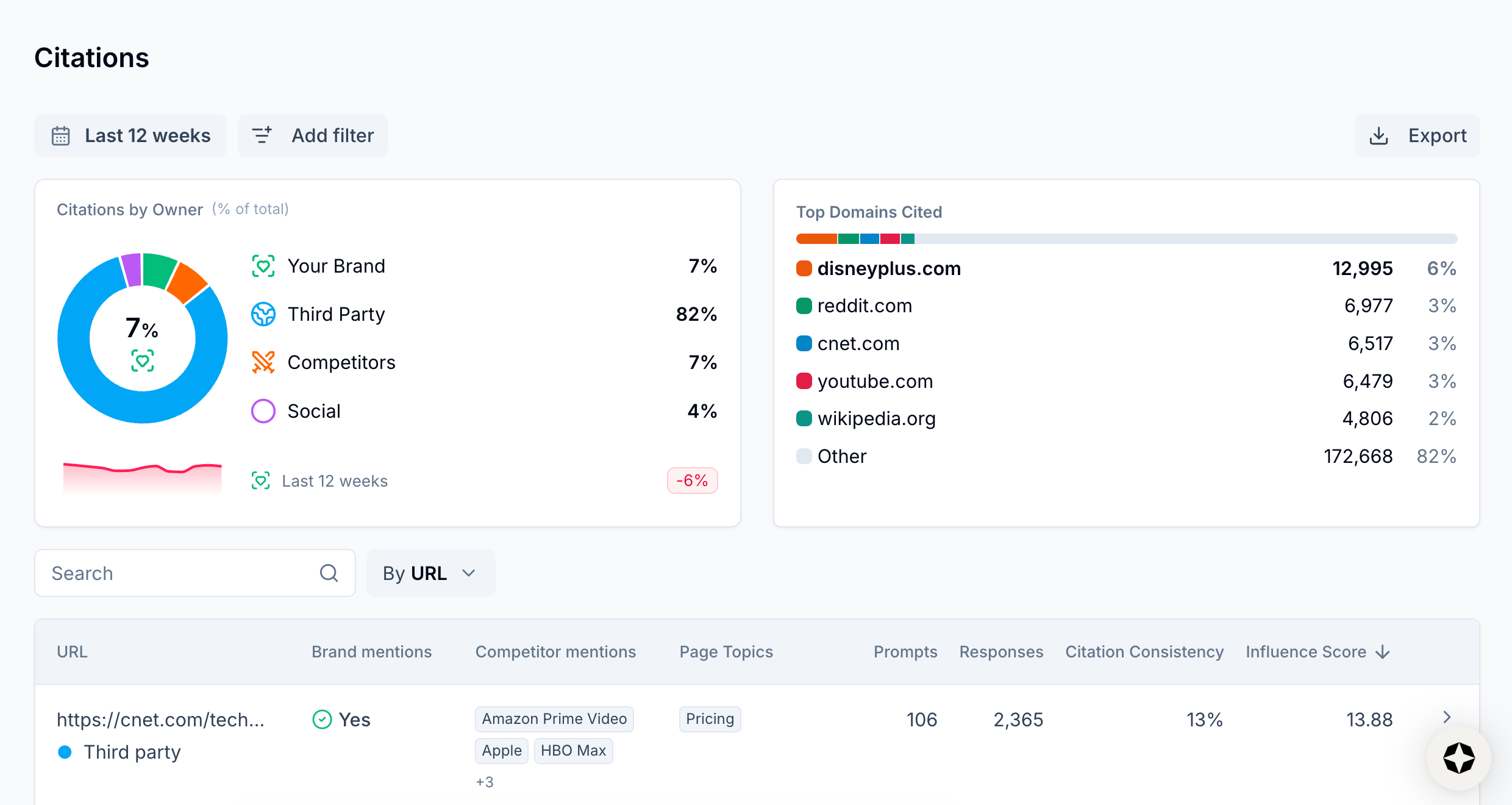

Users can identify and prioritize the external sources shaping AI responses for tracked prompts by using Scrunch to filter by third-party ownership and sort citations based on Influence Score.
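The filter-and-sort step described above can be sketched in a few lines of Python. This is only an illustration of the logic, not Scrunch's API or export format: the `Citation` record, its `owner_type` labels, the numeric `influence_score` field, and the sample rows are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Citation:
    url: str
    owner_type: str         # hypothetical labels: "first_party", "competitor", "third_party"
    influence_score: float  # hypothetical numeric score; higher is assumed to mean more influential


def prioritize_third_party(citations: list[Citation], limit: int = 10) -> list[Citation]:
    """Keep only third-party sources and rank them from highest to lowest score."""
    third_party = [c for c in citations if c.owner_type == "third_party"]
    return sorted(third_party, key=lambda c: c.influence_score, reverse=True)[:limit]


# Made-up sample rows, for illustration only.
sample = [
    Citation("https://example.com/blog/our-product", "first_party", 82.0),
    Citation("https://reviews.example.org/category/cms", "third_party", 64.5),
    Citation("https://news.example.net/industry-roundup", "third_party", 91.2),
    Citation("https://rival.example.com/comparison", "competitor", 77.0),
]

for c in prioritize_third_party(sample):
    print(f"{c.influence_score:6.1f}  {c.url}")
```

The resulting list is a candidate outreach queue: the highest-scoring third-party sources appear first, and first-party and competitor pages are excluded.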

Follow-up question: How do I scale brand mentions in third-party citation sources?

Purpose-built tools can help automate the process. For example, Noble automates outreach, negotiation, and payment to secure mentions in sources already cited by AI platforms, and Stacker automates native, non-sponsored content placements across trusted news outlets.

Related FAQs

What benchmarks or baselines are useful when evaluating AI search performance?

Scrunch recommends tracking brand presence, citations, referral traffic, AI agent traffic, and share of voice versus competitors as key performance indicators.

How can I see if my visibility in AI search is improving or declining over time?

Scrunch recommends monitoring AI search trend data, such as brand mentions and citations, consistently over two- to three-week periods to distinguish real trends from one-off changes.
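One way to separate a real trend from a one-off change is to average daily counts over consecutive windows and compare the two most recent windows. The sketch below assumes a 14-day window (inside the two- to three-week range above) and uses made-up daily mention counts. The function names and the comparison rule are illustrative assumptions, not a method prescribed by Scrunch.

```python
from statistics import mean


def window_averages(daily_counts: list[int], window_days: int = 14) -> list[float]:
    """Average daily counts over consecutive, non-overlapping windows.

    A trailing partial window is dropped so every average covers the same span.
    """
    full_windows = len(daily_counts) // window_days
    return [
        float(mean(daily_counts[i * window_days:(i + 1) * window_days]))
        for i in range(full_windows)
    ]


def trend(daily_counts: list[int], window_days: int = 14) -> str:
    """Compare the two most recent full windows (an assumed, simplified rule)."""
    averages = window_averages(daily_counts, window_days)
    if len(averages) < 2:
        return "not enough data"
    previous, latest = averages[-2], averages[-1]
    if latest > previous:
        return "improving"
    if latest < previous:
        return "declining"
    return "flat"


# Made-up data: 14 days at 3 mentions/day with a single spike of 9, then 14 days at 5/day.
daily_mentions = [3] * 13 + [9] + [5] * 14

print(window_averages(daily_mentions))
print(trend(daily_mentions))
```

Averaging over a full window keeps the single-day spike in the first period from being mistaken for a sustained change.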

How many prompts should I track for AI search?

Scrunch recommends estimating how many prompts to track for AI search with the following formula: X [# of topic clusters] × Y [12-15 questions related to each topic cluster] = Z [# of AI search prompts to track]. The primary goal is a representative sample of data across all customer journey stages, drawn from a mix of branded and non-branded prompts.
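As a worked example of the formula, the short Python sketch below turns a number of topic clusters into a low and high prompt count using the 12-15 questions-per-cluster range. The function name and the example value of 8 topic clusters are assumptions chosen for illustration.

```python
def prompt_count_range(topic_clusters: int, min_questions: int = 12, max_questions: int = 15) -> tuple[int, int]:
    """Return the (low, high) number of prompts to track: clusters x questions per cluster."""
    if topic_clusters < 0:
        raise ValueError("topic_clusters must be non-negative")
    return topic_clusters * min_questions, topic_clusters * max_questions


# Example: 8 topic clusters (an illustrative number, not a recommendation).
low, high = prompt_count_range(8)
print(f"Track roughly {low}-{high} prompts")  # prints "Track roughly 96-120 prompts"
```

With 8 topic clusters, the formula gives 8 × 12 = 96 prompts at the low end and 8 × 15 = 120 at the high end.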